Contributing to EUREC⁴A-MIP

To ease the comparison of the individual contributions and to make them accessible to collaborators and a broader audience, the simulation output from the EUREC⁴A model intercomparison will be made available on the DKRZ SWIFT storage. To use this storage efficiently and to embed it in the analysis workflow, the output needs to be prepared as described below.

Overview

Creation of dataset:
- merge output into reasonable pieces (concatenate along the time dimension)
- add attributes as necessary to describe variables
- ensure a CF-conform time variable
- remove duplicate entries

Generation of zarr files:
- reasonable chunks (depending on the typical access pattern)
- reasonable chunk size (about 10 MB)
- usage of / as dimension_separator

Upload files:
- either write the zarr files directly to the SWIFT storage at DKRZ
- or create the zarr files locally and upload them afterwards to the SWIFT storage
- or create tar files, transfer them to DKRZ disk space and then upload them to the SWIFT storage

Creation

The structure of zarr files is fairly simple (see the Zarr specifications) and can, in general, be created with any software. The easiest way to create zarr files is, however, within the Python environment, in particular with the xarray package:

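import xarray as xr

# open all output files lazily (with dask) and combine them along their coordinates
ds = xr.open_mfdataset("output_*.nc")
# write the combined dataset to a zarr store
ds.to_zarr("output.zarr")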

A few things should be noted to create performant datasets:

reasonable chunks (depending on the typical access pattern)

The shape of the chunks, i.e. how the data is split in time and space, should allow for reasonable performance for all typical access patterns. To achieve this, the chunks should extend along all dimensions, in particular along both space and time, to enable fast analysis of time series at a single point in space as well as plotting the entire domain at once at a particular time.
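To make this trade-off concrete, the following sketch counts how many chunks have to be read for the two typical access patterns: a time series at one grid point and a full map at one time step. The grid size (8641 ten-minute steps on a 1024 × 1024 grid) and the three chunk layouts are hypothetical, and the count assumes the selection starts at a chunk boundary.

```python
from math import ceil, prod


def chunks_read(shape, chunks, selection):
    """Number of chunks that have to be read for a selection.

    shape, chunks: dicts mapping dimension name -> dimension length / chunk length
    selection: dict mapping dimension name -> number of consecutive elements
               selected; dimensions not listed are read completely.
    Assumes the selection starts at a chunk boundary.
    """
    return prod(ceil(selection.get(dim, n) / chunks[dim]) for dim, n in shape.items())


# hypothetical domain: 60 days of 10-minute output on a 1024 x 1024 grid
shape = {"time": 8641, "y": 1024, "x": 1024}

layouts = {
    "one map per chunk": {"time": 1, "y": 1024, "x": 1024},
    "one time series per chunk": {"time": 8641, "y": 1, "x": 1},
    "balanced": {"time": 24, "y": 256, "x": 256},
}

point_series = {"y": 1, "x": 1}  # all time steps at a single grid point
single_map = {"time": 1}         # the whole domain at a single time step

for name, chunks in layouts.items():
    print(f"{name:>26}: time series -> {chunks_read(shape, chunks, point_series):>7} chunks, "
          f"map -> {chunks_read(shape, chunks, single_map):>7} chunks")
```

The balanced layout keeps both access patterns at a moderate number of requests, whereas each of the one-sided layouts makes one pattern very expensive. With float32 data, one balanced chunk holds 24 × 256 × 256 × 4 B ≈ 6.3 MB before compression.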

reasonable chunk size (about 10 MB)

The size of the individual chunks should be about 10 MB. This is a good size for efficient requests over the internet. What matters is the size of the compressed chunks, not the size after decompression.
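A rough way to check a candidate chunk shape is to compute its uncompressed size and to compress one representative block. The sketch below assumes float32 data and uses the standard-library zlib only as a stand-in codec; the real compressed size depends on the codec configured for the zarr store (for instance Blosc with zstd, as in the example script further below) and on the data itself. The chunk shape and the synthetic field are hypothetical.

```python
import zlib

import numpy as np


def chunk_nbytes(chunk_shape, dtype):
    """Uncompressed size in bytes of one chunk."""
    return int(np.prod(chunk_shape)) * np.dtype(dtype).itemsize


def compressed_nbytes(block, level=6):
    """Approximate compressed size in bytes of one chunk.

    zlib is only a stand-in for the codec actually used in the zarr store.
    """
    return len(zlib.compress(np.ascontiguousarray(block).tobytes(), level))


chunk_shape = (24, 256, 256)  # hypothetical (time, y, x) chunk shape
print(f"uncompressed: {chunk_nbytes(chunk_shape, 'float32') / 1e6:.1f} MB")

# Replace this synthetic block by one chunk of real model output for a meaningful estimate
t = np.linspace(0.0, 1.0, chunk_shape[0])[:, None, None]
y = np.linspace(0.0, 1.0, chunk_shape[1])[None, :, None]
x = np.linspace(0.0, 1.0, chunk_shape[2])[None, None, :]
block = (np.sin(6 * x) * np.cos(4 * y) + t).astype("float32")
print(f"compressed (zlib stand-in): {compressed_nbytes(block) / 1e6:.1f} MB")
```

If the estimated compressed size is far from 10 MB, the chunk shape can be scaled along one or more dimensions accordingly.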

usage of / as dimension_separator (for Zarr <= v2)

Zarr files following version 1 and 2 of the specification generally contain one subfolder per variable, which holds all chunks of that variable. To reduce the pressure on the file system, dimension_separator="/" creates more subfolders, such that each folder indexes only a fraction of all files. In Zarr v3, / is already used by default.
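The effect of the separator on the folder layout can be illustrated without zarr itself. The sketch below builds the chunk keys of one variable for a hypothetical number of chunks per dimension and computes the largest number of chunk entries a single folder has to hold. Zarr v3's default key encoding additionally prefixes the keys with c/, which does not change the picture.

```python
from itertools import product
from math import prod


def chunk_keys(nchunks, sep):
    """Chunk keys of one variable, e.g. '0.1.2' (sep='.') or '0/1/2' (sep='/')."""
    return [sep.join(map(str, idx)) for idx in product(*(range(n) for n in nchunks))]


def max_entries_per_folder(nchunks, sep):
    """Largest number of chunk entries that a single folder of the variable contains."""
    if sep == ".":
        # flat layout: all chunk files sit directly in the variable folder
        return prod(nchunks)
    # nested layout: each folder level only indexes one dimension
    return max(nchunks)


nchunks = (361, 4, 4)  # hypothetical number of chunks along (time, y, x)
for sep in (".", "/"):
    print(f"separator {sep!r}: first key {chunk_keys(nchunks, sep)[0]!r}, "
          f"at most {max_entries_per_folder(nchunks, sep)} entries per folder")
```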

merge output into reasonable pieces (concat along time dimension)

Because zarr files are chunked and only those portions that are actually needed for a calculation are loaded into memory, huge datasets can be created without losing performance. Users benefit from this approach because a single load statement gives access to a large amount of data. For the proposed output variables, this means that for each category (“2D Surface and TOA”, “2D Integrated Variables”, “2D fields at specified levels”, “3D fields”) only one dataset (zarr file) should be created. If the temporal or spatial resolution differs for some variables, these variables should be written to an additional dataset. Two time or height coordinates within one dataset should be avoided (i.e. there should only be one time coordinate, time, and no time2). A dataset should always contain all timesteps from the beginning of the simulation until its end.
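A minimal sketch of this step with xarray, using two small synthetic pieces of output as hypothetical stand-ins for consecutive output files (for real files, xr.open_mfdataset as shown above combines them in one call). The variable names follow the 2D example further below; the checks at the end verify that the result has a single, monotonically increasing time coordinate.

```python
import numpy as np
import pandas as pd
import xarray as xr


def fake_output(start, periods, ncell=4):
    """Synthetic stand-in for one output file of a 2D category (hypothetical)."""
    time = pd.date_range(start, periods=periods, freq="10min")
    rng = np.random.default_rng(0)
    return xr.Dataset(
        {
            "t_2m": (("time", "cell"), rng.random((periods, ncell))),
            "u_10m": (("time", "cell"), rng.random((periods, ncell))),
        },
        coords={"time": time},
    )


# two consecutive pieces, e.g. written before and after a model restart
pieces = [fake_output("2020-01-20 00:00", 6), fake_output("2020-01-20 01:00", 6)]

# one dataset per category, covering the simulation from start to end
ds = xr.concat(pieces, dim="time")

# sanity checks: a single, monotonically increasing time coordinate
time_like = [dim for dim in ds.dims if str(dim).startswith("time")]
assert time_like == ["time"], f"more than one time dimension: {time_like}"
assert ds.indexes["time"].is_monotonic_increasing
```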

rename the variables to the names given in the specific documents (LES, SRM)

Having consistent variable names across models significantly improves the user experience when analysing and comparing the output:

ds = ds.rename({"model_var1":"eurec4amip_var1", "model_var2":"eurec4amip_var2"})

add attributes as necessary to describe variables

ds.u10.attrs["standard_name"] = "eastward_wind"
ds.u10.attrs["units"] = "m s-1"  # CF/UDUNITS notation; "ms-1" would mean "per millisecond"

ensure CF-conform time variable

The time units should be CF-conform (e.g. "seconds since 2020-01-20 00:00:00") so that they are easily understood by common tools. In particular, the following should work:

ds.sel(time=slice("2020-01-20 00:00:00", "2020-02-01 00:00:00"))
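If a model writes time as plain numbers, attaching CF-style units and letting xarray decode them is usually enough. The sketch below assumes that the raw time is given in seconds since a hypothetical start date of 2020-01-20 00:00:00; the units string has to be adjusted to the actual model output.

```python
import numpy as np
import xarray as xr

# Hypothetical raw output: time stored as seconds since the start of the simulation,
# together with CF-conform units and calendar attributes
raw = xr.Dataset(
    {"u10": ("time", np.array([1.0, 2.0, 3.0, 4.0]))},
    coords={
        "time": (
            "time",
            np.array([0, 21600, 43200, 64800]),
            {"units": "seconds since 2020-01-20 00:00:00", "calendar": "standard"},
        )
    },
)

# decode_cf interprets the "units" attribute and converts time to datetime64 values
ds = xr.decode_cf(raw)

# Selecting by date strings now works as required above
subset = ds.sel(time=slice("2020-01-20 06:00:00", "2020-01-20 12:00:00"))
print(subset["time"].values)
```

When such a dataset is written with to_zarr, xarray encodes the time again with CF-conform units.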

remove duplicate entries

Please check the output for duplicate timesteps and make sure that the time is monotonically increasing:

ds = ds.drop_duplicates(dim="time")
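The following sketch shows both checks on a small synthetic time axis in which two timesteps were written twice, as can happen after a restart. drop_duplicates keeps the first occurrence by default (keep="last" keeps the later one instead); sortby then guarantees a monotonically increasing time axis. The data are hypothetical.

```python
import numpy as np
import pandas as pd
import xarray as xr

# Hypothetical output of a restarted run: 00:10 and 00:20 were written twice
time = pd.to_datetime([
    "2020-01-20 00:00", "2020-01-20 00:10", "2020-01-20 00:20",
    "2020-01-20 00:10", "2020-01-20 00:20", "2020-01-20 00:30",
])
ds = xr.Dataset({"u10": ("time", np.arange(6.0))}, coords={"time": time})

ds = ds.drop_duplicates(dim="time")  # keeps the first occurrence of each timestep
ds = ds.sortby("time")               # ensures a monotonically increasing time axis

assert ds.indexes["time"].is_unique
assert ds.indexes["time"].is_monotonic_increasing
```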

Upload

Zarr files are well suited for upload to object stores such as the SWIFT storage at DKRZ, Azure Blob Storage, Google Cloud Storage buckets and Amazon S3. Due to their structure, every single chunk is saved as a separate object. On a file system, currently the standard on HPC systems for climate simulations, these chunks are the individual files within a zarr directory:

tree eraint_uvz.zarr/
eraint_uvz.zarr/
|-- latitude
|   `-- 0
|-- level
|   `-- 0
|-- longitude
|   `-- 0
|-- month
|   `-- 0
|-- u
|   |-- 0
|   |   |-- 0
|   |   |   |-- 0
|   |   |   |   `-- 0
|   |   |   `-- 1
|   |   |       `-- 0
|   |   `-- 1
|   |       |-- 0
|   |       |   `-- 0
|   |       `-- 1
|   |           `-- 0
|   `-- 1
|       |-- 0

These files can be written directly to the DKRZ SWIFT storage during creation (1), uploaded after creating the zarr file locally (2), or transferred to DKRZ’s file storage in the traditional way via ssh or ftp (3), from where they need to be uploaded to the SWIFT storage as in (2).

Writing directly to the swift-storage

This approach requires a zarr version later than 3.1.3 due to a bug in how zarr accesses SWIFT storage (which has been fixed but not yet released as of October 2025).

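import xarray as xr
import swiftspec  # noqa: F401 - fsspec implementation for swift:// URLs, must be installed


async def get_client(**kwargs):
    # HTTP client that retries failed requests (example from the swiftspec documentation)
    import aiohttp
    import aiohttp_retry

    retry_options = aiohttp_retry.ExponentialRetry(
        attempts=3,
        exceptions={OSError, aiohttp.ServerDisconnectedError})
    retry_client = aiohttp_retry.RetryClient(raise_for_status=False, retry_options=retry_options)
    return retry_client


ds = xr.tutorial.load_dataset("eraint_uvz")  # This is just an example dataset

# The access token from ~/.swiftenv (see below) has to be active in the environment
store_url = "swift://swift.dkrz.de/dkrz_xxxxx/CONTAINER/output.zarr"
ds.to_zarr(store_url, storage_options={"get_client": get_client})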

Writing the zarr file locally and uploading it after creation

2.1 Writing dataset to disk

import xarray as xr

ds = xr.tutorial.load_dataset("eraint_uvz")  # This is just an example dataset
# Passing a path writes a local directory store with both zarr v2 and v3
# (zarr.DirectoryStore only exists in zarr v2)
ds.to_zarr("output.zarr")

2.2 Uploading file to the DKRZ SWIFT storage

The upload can be performed with python-swiftclient, which can be installed via

pip install python-swiftclient

To upload the zarr file to SWIFT, an access token needs to be generated first, e.g. with the swift-token.py script provided by DKRZ:

python swift-token.py new
# Account: bm1349
# Username: <DKRZ-USERNAME>
# Password: <YOUR-DKRZ-PASSWORD>

This stores the token in ~/.swiftenv which should look like:

#token expires on: Wed 08. Oct 10:38:35 CEST 2025
setenv OS_AUTH_TOKEN dkrz_<TOKEN>
setenv OS_STORAGE_URL https://swift.dkrz.de/v1/dkrz_<STORAGEURL>
setenv OS_AUTH_URL " "
setenv OS_USERNAME " "
setenv OS_PASSWORD " "

The OS_AUTH_TOKEN and OS_STORAGE_URL can also be requested directly with:

curl -I -X GET https://swift.dkrz.de/auth/v1.0 -H "x-auth-user: <GROUP>:<USERNAME>" -H 'x-auth-key: <PASSWORD>' > ~/.swiftenv

Note that the ~/.swiftenv file needs to be edited afterwards, because it then contains the raw response headers instead of the setenv lines shown above. Alternatively, the swift client can be used instead of the swift-token.py helper script:

swift auth -A https://swift.dkrz.de/auth/v1.0 -U <GROUP>:<USERNAME> -K <PASSWORD> > ~/.swiftenv

where <GROUP> is bm1349 for EUREC4A-MIP. The token has to be activated with the following command each time a new terminal is opened (setenv is csh/tcsh syntax; in bash, the lines have to read export NAME=value instead):

source ~/.swiftenv
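The editing of ~/.swiftenv after the curl request can also be scripted. The sketch below assumes that the response contains the standard Swift X-Auth-Token and X-Storage-Url headers and writes them in the same setenv form as the example ~/.swiftenv above; the function name is hypothetical.

```python
def swiftenv_from_headers(header_text):
    """Convert the response headers of the auth request into ~/.swiftenv lines."""
    headers = {}
    for line in header_text.splitlines():
        # header lines look like "Name: value"; the status line has no colon
        if ":" in line:
            key, value = line.split(":", 1)
            headers[key.strip().lower()] = value.strip()
    return (
        f"setenv OS_AUTH_TOKEN {headers['x-auth-token']}\n"
        f"setenv OS_STORAGE_URL {headers['x-storage-url']}\n"
    )
```

For example, with path = pathlib.Path.home() / ".swiftenv", the call path.write_text(swiftenv_from_headers(path.read_text())) replaces the raw curl output by the two setenv lines.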

Please contact Hauke Schulz if you do not have a DKRZ account; otherwise, let him know your username so that it can be added to the project.

The upload itself is performed by

swift upload -c <CONTAINER> <FILENAME>

where <CONTAINER> should be set to a meaningful name describing the dataset <FILENAME>. Examples are ICON_LES_control_312m, HARMONIE_SRM_warming_624m or, in general, names following the schema <model>_<setup>_<experiment>_<resolution>. The filename can follow the same principle and just append _<subset>, e.g. ICON_LES_control_312m_3D or HARMONIE_SRM_warming_624m_2D. In general, the container and file names are not important, because the files will be referenced through the EUREC4A intake catalog, where they get a meaningful name. Nevertheless, a bit of structure is helpful for users who want to download the datasets themselves.

Note

Please create a new container for each larger dataset (i.e. one consisting of many files, more than 1000). This makes it much easier to delete erroneous datasets, because containers can be deleted much more efficiently on the object store than individual files.
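Before uploading, it can help to build the names from the schema above and to check how many objects a store will produce, since every file of a zarr directory becomes one object. Both helpers below are hypothetical sketches; the 1000-file threshold is taken from the note above, and the local store path is assumed to equal the file name.

```python
import os


def dataset_name(model, setup, experiment, resolution, subset=None):
    """Container name <model>_<setup>_<experiment>_<resolution>, or a file name with _<subset>."""
    parts = [model, setup, experiment, resolution] + ([subset] if subset else [])
    if any("/" in part for part in parts):
        raise ValueError("name parts must not contain '/'")
    return "_".join(parts)


def count_objects(zarr_path):
    """Number of files in a local zarr directory, i.e. number of objects after the upload."""
    return sum(len(files) for _, _, files in os.walk(zarr_path))


container = dataset_name("ICON", "LES", "control", "312m")
filename = dataset_name("ICON", "LES", "control", "312m", subset="3D")
n_objects = count_objects(filename)  # hypothetical local store with that name
if n_objects > 1000:
    print(f"{filename}: {n_objects} objects, please use a separate container")
```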

Creating zarr files locally and transferring them to DKRZ’s file storage via ssh/ftp

To transfer zarr files via ftp or ssh, it is advisable to pack them into larger archives of several GB each. If a single tar file would be too large and the content has to be distributed across several tar files, each tar file should be self-contained, i.e. no file of the zarr store is split between two archives. This is needed to allow opening tar-ed zarr files directly without unpacking them.

Note

Compression via zip/gzip is not needed and should be avoided. The data in the zarr files is already compressed and additional compression is not expected to reduce the data amount significantly.
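One way to produce such self-contained archives is to assign whole files of the zarr directory to consecutive tar files until a size limit is reached. The sketch below uses Python's tarfile module without compression, in line with the note above; the function name and the 5 GiB default limit are hypothetical, and the limit is approximate because tar headers are not counted.

```python
import os
import tarfile


def pack_zarr(zarr_path, out_prefix, max_bytes=5 * 1024**3):
    """Pack a zarr directory into uncompressed tar files of roughly max_bytes each.

    Every file of the store ends up completely in one archive (files are never
    split), so each archive is self-contained. Returns the archive names.
    """
    zarr_path = os.path.abspath(zarr_path)
    parent = os.path.dirname(zarr_path)
    # sorted paths keep the chunks of one variable together
    paths = sorted(
        os.path.join(root, name)
        for root, _, files in os.walk(zarr_path)
        for name in files
    )

    archives, tar, size_in_tar = [], None, 0
    for path in paths:
        size = os.path.getsize(path)
        # start a new archive if this file would push the current one over the limit
        if tar is None or size_in_tar + size > max_bytes:
            if tar is not None:
                tar.close()
            archives.append(f"{out_prefix}_{len(archives):03d}.tar")
            tar = tarfile.open(archives[-1], "w")  # mode "w": no compression
            size_in_tar = 0
        # archive paths start with the store name, e.g. output.zarr/u/0/0
        tar.add(path, arcname=os.path.relpath(path, parent))
        size_in_tar += size
    if tar is not None:
        tar.close()
    return archives
```

For example, pack_zarr("output.zarr", "output_part") writes output_part_000.tar, output_part_001.tar and so on next to the current working directory.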

Zarr example with chunking and compression

A sample script for the Zarr conversion, showing how to set chunk sizes and turn on compression. It also attempts to control the memory usage of Dask. This script assumes Zarr version >= 3 and writes the data in the Zarr 3 format.

import xarray as xr
import zarr
import dask
from dask.distributed import Client
import numpy as np
from numcodecs.zarr3 import Blosc
import swiftspec  # fsspec implementation for swift:// URLs, used when writing directly to SWIFT

# try to limit scratch disk usage
dask.config.set({'distributed.worker.memory.max-spill': 100*1024**3})

# set limits on Dask RAM use - experimental
client = Client(processes=False, n_workers=1, threads_per_worker=4, memory_limit=100*1024**3)

file_list = ['input_file_1.nc', 'input_file_2.nc']

# chunks is a dictionary of chunk sizes. Should ideally be integer multiples of the
# chunk sizes of the input data
ds = xr.open_mfdataset(file_list, chunks={'time': 1, 'xm': 1024, 'xt': 1024, 'ym': 1024, 'yt': 1024})
print('loading done')
print(ds)
print()

# optionally rename variables.
# mapping of variables - input name : output name
variables = {
    'twp': 'prw',
    'lwp': 'clwvi',
    'us': 'u10m',
    'vs': 'v10m',
    'Ts': 't10m',
    # ...
}
print('renaming variables')
ds = ds.rename(variables)
print(ds)

# In case dask did not obey the requested chunk sizes,
# re-chunk manually here
#ds = ds.chunk({'time' : time_chunk_size})

# Set output chunk sizes
# These chunk sizes should be integer fractions of the in-memory chunk sizes of ds
# and be chosen for 1) suitable chunk size, ~10MB when compressed
# and 2) performance when loading data with the expected access patterns
xs = 1024  # hypothetical value; must divide the in-memory chunk size (1024) along x
ys = 1024  # hypothetical value; must divide the in-memory chunk size (1024) along y
output_chunks = {
    'time': 1,
    'xt': xs,
    'xm': xs,
    'yt': ys,
    'ym': ys,
}
# This example has xt,xm and yt,ym here because DALES has a staggered grid, where some variables use
# the xt (cell center) coordinate and others use xm (cell border)

# Create an encoding dictionary which sets options for every variable.
# The chunk sizes are read from the output_chunks dictionary created above,
# so every dimension of every variable (e.g. vertical levels) must be listed there.
# zstd is a compression algorithm, which is fast and compresses well.
# The compression level is a trade-off between compression speed and
# the final size, higher level takes longer but results in a smaller file.
encoding = {name: {
    'chunks': [output_chunks[d] for d in var.dims],
    'compressors': [Blosc(cname='zstd', clevel=8)]
} for name, var in ds.items()}

# https with retry, from swiftspec docs.
async def get_client(**kwargs):
    import aiohttp
    import aiohttp_retry
    retry_options = aiohttp_retry.ExponentialRetry(
        attempts=3,
        exceptions={OSError, aiohttp.ServerDisconnectedError})
    retry_client = aiohttp_retry.RetryClient(raise_for_status=False, retry_options=retry_options)
    return retry_client

# write directly to SWIFT
#store_url='swift://swift.dkrz.de/dkrz_xxxxx/CONTAINER/zarrname.zarr'
#ds.to_zarr(store_url, encoding=encoding, mode='w',
#           zarr_format=3, storage_options={"get_client": get_client})

# write to local disk:
zarr_dir = 'output.zarr'  # hypothetical local output path
ds.to_zarr(zarr_dir, encoding=encoding, mode='w', zarr_format=3)

Indexing

After the upload has finished, the output needs to be added to the EUREC⁴A-Intake catalog. Please open a pull request there and follow the contribution guidelines. The maintainers will help with this process if needed.

Optionally, some description about the specific model run can be added to this webpage by opening a Pull Request.

Accessing the simulations

The project is ongoing and so far no contributions have been made available. The documentation of the ICON-LES and Cloud Botany with DALES simulations, which were performed independently of this intercomparison project, already gives an impression of how the data will be accessible.

The EUREC⁴A-MIP output should be available in a similar manner and can further be combined into a datatree object.

Datatree object

A datatree object can group several datasets into one larger object. It allows operations to be applied to all datasets within such an object, even in cases where the dimension sizes do not agree, e.g. due to different resolutions. A blog post by the developers shows the potential of these objects for simulation ensembles and their intercomparison. For EUREC⁴A-MIP, the datatree could look as follows:

[Interactive DataTree representation: an empty root node with nested groups named after models and setups (e.g. DALES, ICON, LES), each holding a "3D" dataset with variables t and u on (time: 8641, height: 50, cell: 5000; heights 0 to 9800 m in 200 m steps) and a "2D" dataset with variables t_2m and u_10m on (time: 8641, cell: 5000), both with 10-minute output from 2020-01-01 to 2020-03-01 as lazily loaded float64 dask arrays. One group instead holds coarser data with time: 200 (10-minute steps from 2020-01-01 00:00 to 2020-01-02 09:10), height: 17 (0 to 9600 m in 600 m steps) and cell: 5000.]

- t_2m(time, cell)float64dask.array<chunksize=(200, 579), meta=np.ndarray>

Array Chunk Bytes 7.63 MiB 904.69 kiB Shape (200, 5000) (200, 579) Dask graph 9 chunks in 4 graph layers Data type float64 numpy.ndarray - u_10m(time, cell)float64dask.array<chunksize=(200, 579), meta=np.ndarray>

Array Chunk Bytes 7.63 MiB 904.69 kiB Shape (200, 5000) (200, 579) Dask graph 9 chunks in 5 graph layers Data type float64 numpy.ndarray

<xarray.DatasetView> Size: 16MB Dimensions: (time: 200, cell: 5000) Coordinates: * time (time) datetime64[ns] 2kB 2020-01-01 ... 2020-01-02T09:10:00 Dimensions without coordinates: cell Data variables: t_2m (time, cell) float64 8MB dask.array<chunksize=(200, 579), meta=np.ndarray> u_10m (time, cell) float64 8MB dask.array<chunksize=(200, 579), meta=np.ndarray>2D
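The dask `chunksize` in these reprs fixes how much data one chunk holds before compression. That amount is the product of the chunk shape and the 8 bytes of a float64 value. Below is a minimal sketch of this arithmetic in plain Python. The helper `chunk_nbytes` is illustrative and not part of xarray or dask.

```python
from math import prod

FLOAT64_BYTES = 8  # itemsize of the float64 variables t, u, t_2m and u_10m


def chunk_nbytes(chunk_shape, itemsize=FLOAT64_BYTES):
    """Uncompressed size in bytes of one chunk with the given shape."""
    return prod(chunk_shape) * itemsize


for shape in [(200, 579), (200, 50, 579)]:
    nbytes = chunk_nbytes(shape)
    print(shape, nbytes, f"{nbytes / 2**10:.2f} KiB", f"{nbytes / 2**20:.2f} MiB")
```

For `(200, 579)` this gives 926,400 bytes (about 904.69 KiB). For `(200, 50, 579)` it gives about 44.17 MiB. The ~10MB guideline refers to compressed chunks, so these uncompressed values are only a first estimate. The stored size depends on the compressor and the data.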

ICON (no dimensions or data variables)

```text
<xarray.DatasetView> Size: 800MB
Dimensions:  (time: 200, height: 50, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 2kB 2020-01-01 ... 2020-01-02T09:10:00
  * height   (height) int64 400B 0 200 400 600 800 ... 9000 9200 9400 9600 9800
Dimensions without coordinates: cell
Data variables:
    t        (time, height, cell) float64 400MB dask.array<chunksize=(200, 50, 579), meta=np.ndarray>
    u        (time, height, cell) float64 400MB dask.array<chunksize=(200, 50, 579), meta=np.ndarray>
```

3D
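With `chunksize=(200, 50, 579)`, only `cell` is split. Each variable of this 3D dataset therefore consists of a few chunks, and every chunk spans the full time and height range. The following sketch counts them in plain Python. The helper names are hypothetical.

```python
from math import ceil, prod


def chunks_per_dim(shape, chunk_shape):
    """Number of chunks along each dimension; the last one may be partial."""
    return tuple(ceil(n / c) for n, c in zip(shape, chunk_shape))


def last_chunk_len(n, c):
    """Length of the final (possibly partial) chunk along one dimension."""
    return n - (ceil(n / c) - 1) * c


shape = (200, 50, 5000)       # (time, height, cell) of the 3D dataset above
chunk_shape = (200, 50, 579)  # dask chunksize reported in the repr

per_dim = chunks_per_dim(shape, chunk_shape)
print(per_dim, prod(per_dim))                    # chunks per dimension, total
print(last_chunk_len(shape[2], chunk_shape[2]))  # cells in the trailing chunk
```

This yields 9 chunks per variable, and the last one holds only 368 of the 579 cells. Because `time` is not split, plotting the whole domain at a single time step requires all 9 chunks, i.e. the entire variable. This is the access-pattern trade-off described in the chunking guidance above.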

```text
<xarray.DatasetView> Size: 16MB
Dimensions:  (time: 200, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 2kB 2020-01-01 ... 2020-01-02T09:10:00
Dimensions without coordinates: cell
Data variables:
    t_2m     (time, cell) float64 8MB dask.array<chunksize=(200, 579), meta=np.ndarray>
    u_10m    (time, cell) float64 8MB dask.array<chunksize=(200, 579), meta=np.ndarray>
```

2D

<xarray.DatasetView> Size: 0B Dimensions: () Data variables: *empty*HARMONIE- time: 100

- height: 50

- cell: 5000

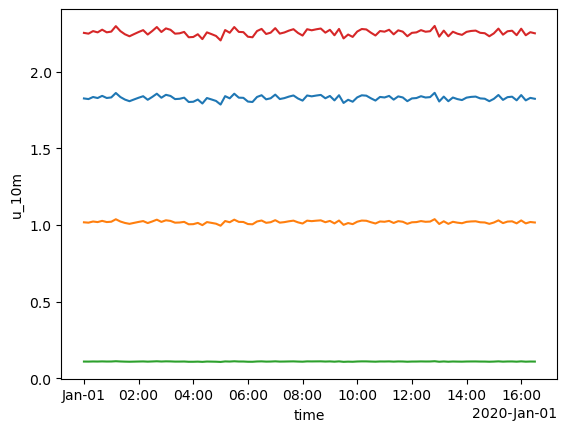

- time(time)datetime64[ns]2020-01-01 ... 2020-01-01T16:30:00

array(['2020-01-01T00:00:00.000000000', '2020-01-01T00:10:00.000000000', '2020-01-01T00:20:00.000000000', '2020-01-01T00:30:00.000000000', '2020-01-01T00:40:00.000000000', '2020-01-01T00:50:00.000000000', '2020-01-01T01:00:00.000000000', '2020-01-01T01:10:00.000000000', '2020-01-01T01:20:00.000000000', '2020-01-01T01:30:00.000000000', '2020-01-01T01:40:00.000000000', '2020-01-01T01:50:00.000000000', '2020-01-01T02:00:00.000000000', '2020-01-01T02:10:00.000000000', '2020-01-01T02:20:00.000000000', '2020-01-01T02:30:00.000000000', '2020-01-01T02:40:00.000000000', '2020-01-01T02:50:00.000000000', '2020-01-01T03:00:00.000000000', '2020-01-01T03:10:00.000000000', '2020-01-01T03:20:00.000000000', '2020-01-01T03:30:00.000000000', '2020-01-01T03:40:00.000000000', '2020-01-01T03:50:00.000000000', '2020-01-01T04:00:00.000000000', '2020-01-01T04:10:00.000000000', '2020-01-01T04:20:00.000000000', '2020-01-01T04:30:00.000000000', '2020-01-01T04:40:00.000000000', '2020-01-01T04:50:00.000000000', '2020-01-01T05:00:00.000000000', '2020-01-01T05:10:00.000000000', '2020-01-01T05:20:00.000000000', '2020-01-01T05:30:00.000000000', '2020-01-01T05:40:00.000000000', '2020-01-01T05:50:00.000000000', '2020-01-01T06:00:00.000000000', '2020-01-01T06:10:00.000000000', '2020-01-01T06:20:00.000000000', '2020-01-01T06:30:00.000000000', '2020-01-01T06:40:00.000000000', '2020-01-01T06:50:00.000000000', '2020-01-01T07:00:00.000000000', '2020-01-01T07:10:00.000000000', '2020-01-01T07:20:00.000000000', '2020-01-01T07:30:00.000000000', '2020-01-01T07:40:00.000000000', '2020-01-01T07:50:00.000000000', '2020-01-01T08:00:00.000000000', '2020-01-01T08:10:00.000000000', '2020-01-01T08:20:00.000000000', '2020-01-01T08:30:00.000000000', '2020-01-01T08:40:00.000000000', '2020-01-01T08:50:00.000000000', '2020-01-01T09:00:00.000000000', '2020-01-01T09:10:00.000000000', '2020-01-01T09:20:00.000000000', '2020-01-01T09:30:00.000000000', '2020-01-01T09:40:00.000000000', '2020-01-01T09:50:00.000000000', 
'2020-01-01T10:00:00.000000000', '2020-01-01T10:10:00.000000000', '2020-01-01T10:20:00.000000000', '2020-01-01T10:30:00.000000000', '2020-01-01T10:40:00.000000000', '2020-01-01T10:50:00.000000000', '2020-01-01T11:00:00.000000000', '2020-01-01T11:10:00.000000000', '2020-01-01T11:20:00.000000000', '2020-01-01T11:30:00.000000000', '2020-01-01T11:40:00.000000000', '2020-01-01T11:50:00.000000000', '2020-01-01T12:00:00.000000000', '2020-01-01T12:10:00.000000000', '2020-01-01T12:20:00.000000000', '2020-01-01T12:30:00.000000000', '2020-01-01T12:40:00.000000000', '2020-01-01T12:50:00.000000000', '2020-01-01T13:00:00.000000000', '2020-01-01T13:10:00.000000000', '2020-01-01T13:20:00.000000000', '2020-01-01T13:30:00.000000000', '2020-01-01T13:40:00.000000000', '2020-01-01T13:50:00.000000000', '2020-01-01T14:00:00.000000000', '2020-01-01T14:10:00.000000000', '2020-01-01T14:20:00.000000000', '2020-01-01T14:30:00.000000000', '2020-01-01T14:40:00.000000000', '2020-01-01T14:50:00.000000000', '2020-01-01T15:00:00.000000000', '2020-01-01T15:10:00.000000000', '2020-01-01T15:20:00.000000000', '2020-01-01T15:30:00.000000000', '2020-01-01T15:40:00.000000000', '2020-01-01T15:50:00.000000000', '2020-01-01T16:00:00.000000000', '2020-01-01T16:10:00.000000000', '2020-01-01T16:20:00.000000000', '2020-01-01T16:30:00.000000000'], dtype='datetime64[ns]') - height(height)int640 200 400 600 ... 9400 9600 9800

array([ 0, 200, 400, 600, 800, 1000, 1200, 1400, 1600, 1800, 2000, 2200, 2400, 2600, 2800, 3000, 3200, 3400, 3600, 3800, 4000, 4200, 4400, 4600, 4800, 5000, 5200, 5400, 5600, 5800, 6000, 6200, 6400, 6600, 6800, 7000, 7200, 7400, 7600, 7800, 8000, 8200, 8400, 8600, 8800, 9000, 9200, 9400, 9600, 9800])

- t(time, height, cell)float64dask.array<chunksize=(100, 50, 579), meta=np.ndarray>

Array Chunk Bytes 190.73 MiB 22.09 MiB Shape (100, 50, 5000) (100, 50, 579) Dask graph 9 chunks in 2 graph layers Data type float64 numpy.ndarray - u(time, height, cell)float64dask.array<chunksize=(100, 50, 579), meta=np.ndarray>

Array Chunk Bytes 190.73 MiB 22.09 MiB Shape (100, 50, 5000) (100, 50, 579) Dask graph 9 chunks in 3 graph layers Data type float64 numpy.ndarray

<xarray.DatasetView> Size: 400MB Dimensions: (time: 100, height: 50, cell: 5000) Coordinates: * time (time) datetime64[ns] 800B 2020-01-01 ... 2020-01-01T16:30:00 * height (height) int64 400B 0 200 400 600 800 ... 9000 9200 9400 9600 9800 Dimensions without coordinates: cell Data variables: t (time, height, cell) float64 200MB dask.array<chunksize=(100, 50, 579), meta=np.ndarray> u (time, height, cell) float64 200MB dask.array<chunksize=(100, 50, 579), meta=np.ndarray>3D- time: 100
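The time coordinates in these reprs are consistent with a fixed 10-minute output interval starting at 2020-01-01 00:00. Under that interval, 100 steps end at 16:30, 200 steps end at 2020-01-02T09:10, and 8641 steps end at 2020-03-01. A CF-conform time variable stores such instants as numbers relative to a reference date, which is named in the `units` attribute. The sketch below is plain Python and rests on two assumptions: the 10-minute step, and our choice of `minutes since 2020-01-01 00:00:00` as units. The helper names are hypothetical.

```python
from datetime import datetime, timedelta

REFERENCE = datetime(2020, 1, 1)              # first time step in the reprs
STEP = timedelta(minutes=10)                  # assumed output interval
UNITS = "minutes since 2020-01-01 00:00:00"   # CF-style units string (our choice)


def time_axis(n_steps, start=REFERENCE, step=STEP):
    """Regularly spaced time steps starting at `start`."""
    return [start + i * step for i in range(n_steps)]


def encode_minutes(times, reference=REFERENCE):
    """Encode datetimes as integer minutes since `reference`."""
    return [(t - reference) // timedelta(minutes=1) for t in times]


for n in (100, 200, 8641):
    times = time_axis(n)
    print(n, times[-1].isoformat(), encode_minutes(times)[-1], UNITS)
```

xarray can apply the same encoding when writing, e.g. `ds.to_zarr(store, encoding={"time": {"units": "minutes since 2020-01-01 00:00:00"}})`. It should then store integer offsets together with `units` and `calendar` attributes.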

```text
<xarray.DatasetView> Size: 8MB
Dimensions:  (time: 100, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 800B 2020-01-01 ... 2020-01-01T16:30:00
Dimensions without coordinates: cell
Data variables:
    t_2m     (time, cell) float64 4MB dask.array<chunksize=(100, 579), meta=np.ndarray>
    u_10m    (time, cell) float64 4MB dask.array<chunksize=(100, 579), meta=np.ndarray>
```

2D

AROME (no dimensions or data variables)

SRM (no dimensions or data variables)

control (no dimensions or data variables)

```text
<xarray.DatasetView> Size: 35GB
Dimensions:  (time: 8641, height: 50, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 69kB 2020-01-01 ... 2020-03-01
  * height   (height) int64 400B 0 200 400 600 800 ... 9000 9200 9400 9600 9800
Dimensions without coordinates: cell
Data variables:
    t        (time, height, cell) float64 17GB dask.array<chunksize=(579, 50, 579), meta=np.ndarray>
    u        (time, height, cell) float64 17GB dask.array<chunksize=(579, 50, 579), meta=np.ndarray>
```

3D
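Each control chunk of shape `(579, 50, 579)` holds roughly 128 MiB of uncompressed float64 data. That is more than ten times the ~10MB guideline, which refers to compressed size. One way to shrink the chunks is to keep the height and cell chunking and shorten only the time chunk. The time-chunk length then follows from the bytes per time step. Below is a rough sketch under that assumption. The helper names are hypothetical, and budgeting on uncompressed bytes errs on the small side.

```python
from math import ceil, prod

TARGET_BYTES = 10 * 10**6  # ~10 MB guideline (meant for *compressed* chunks)
ITEMSIZE = 8               # float64


def chunk_nbytes(chunk_shape, itemsize=ITEMSIZE):
    """Uncompressed size in bytes of one chunk."""
    return prod(chunk_shape) * itemsize


def time_chunk_for_budget(other_chunk_dims, target=TARGET_BYTES, itemsize=ITEMSIZE):
    """Longest time chunk whose uncompressed size stays within `target`."""
    bytes_per_step = prod(other_chunk_dims) * itemsize
    return max(1, target // bytes_per_step)


shape = (8641, 50, 5000)   # (time, height, cell) of the 3D control dataset
current = (579, 50, 579)   # chunksize reported in the repr
print(f"{chunk_nbytes(current) / 2**20:.2f} MiB per chunk now")

n_time = time_chunk_for_budget(current[1:])
proposal = (n_time,) + current[1:]
n_chunks = prod(ceil(n / c) for n, c in zip(shape, proposal))
print(proposal, chunk_nbytes(proposal), "bytes,", n_chunks, "chunks")
```

This suggests chunks of `(43, 50, 579)`, about 9.96 MB uncompressed, at the cost of 1809 instead of 135 chunks per variable. You could apply that shape with `ds.chunk({"time": 43, "height": 50, "cell": 579})` before writing. Whether to shorten `time` or `cell` depends on which access pattern should stay fast.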

```text
<xarray.DatasetView> Size: 691MB
Dimensions:  (time: 8641, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 69kB 2020-01-01 ... 2020-03-01
Dimensions without coordinates: cell
Data variables:
    t_2m     (time, cell) float64 346MB dask.array<chunksize=(579, 579), meta=np.ndarray>
    u_10m    (time, cell) float64 346MB dask.array<chunksize=(579, 579), meta=np.ndarray>
```

2D
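The 2D control data uses `chunksize=(579, 579)`, so both `time` and `cell` are split. As a result, both access patterns named in the chunking guidance touch only a moderate number of chunks. Below is a small plain-Python sketch counting the chunks each pattern has to read. The variable names are illustrative.

```python
from math import ceil

shape = {"time": 8641, "cell": 5000}  # 2D control dataset above
chunks = {"time": 579, "cell": 579}   # chunksize reported in the repr
ITEMSIZE = 8                          # float64


def n_chunks(dim):
    """Number of chunks along one dimension."""
    return ceil(shape[dim] / chunks[dim])


# Full time series at one cell: one chunk along `cell`, every chunk along `time`.
timeseries_chunks = n_chunks("time")
# Whole domain at one time step: one chunk along `time`, every chunk along `cell`.
snapshot_chunks = n_chunks("cell")
chunk_mib = chunks["time"] * chunks["cell"] * ITEMSIZE / 2**20

print(timeseries_chunks, snapshot_chunks, n_chunks("time") * n_chunks("cell"))
print(f"{chunk_mib:.2f} MiB per chunk (uncompressed)")
```

A single-cell time series reads 15 of the 135 chunks, and a full-domain snapshot reads 9. Each chunk is about 2.56 MiB before compression. Only one column or row of each fetched chunk is actually used, so some over-reading is the price for keeping both patterns reasonably fast.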

DALES (no dimensions or data variables)

```text
<xarray.DatasetView> Size: 35GB
Dimensions:  (time: 8641, height: 50, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 69kB 2020-01-01 ... 2020-03-01
  * height   (height) int64 400B 0 200 400 600 800 ... 9000 9200 9400 9600 9800
Dimensions without coordinates: cell
Data variables:
    t        (time, height, cell) float64 17GB dask.array<chunksize=(579, 50, 579), meta=np.ndarray>
    u        (time, height, cell) float64 17GB dask.array<chunksize=(579, 50, 579), meta=np.ndarray>
```

3D

Group 2D:
<xarray.DatasetView> Size: 691MB
Dimensions:  (time: 8641, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 69kB 2020-01-01 ... 2020-03-01
Dimensions without coordinates: cell
Data variables:
    t_2m     (time, cell) float64 346MB dask.array<chunksize=(579, 579), meta=np.ndarray>
    u_10m    (time, cell) float64 346MB dask.array<chunksize=(579, 579), meta=np.ndarray>
Each variable: 329.63 MiB in 135 chunks of 2.56 MiB (uncompressed).
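The 3D chunks above are about 128 MiB uncompressed, far above the ~10MB guideline. Below is a minimal sketch of one way to pick a smaller chunk shape. It makes three assumptions: the uncompressed size serves as a conservative proxy (compressed chunks end up smaller), the 50 height levels stay in one chunk, and time and cell get roughly equal chunk lengths, so each chunk still spans both space and time. The function suggest_chunks is a hypothetical helper, not part of xarray, dask or zarr.

```python
import math


def suggest_chunks(shape, itemsize, target_bytes, keep_whole=()):
    """Hypothetical helper: choose a chunk shape whose uncompressed size is
    close to target_bytes, and not above it whenever that is achievable.

    Dimensions whose index is listed in keep_whole are not split. The
    remaining dimensions get roughly equal chunk lengths; a dimension shorter
    than its equal share keeps its full length, and the leftover budget goes
    to the other dimensions.
    """
    chunks = list(shape)
    split = [i for i in range(len(shape)) if i not in keep_whole]
    bytes_per_split_element = math.prod(shape[i] for i in keep_whole) * itemsize
    budget = target_bytes / bytes_per_split_element  # elements across split dims

    # Handle the shortest dimensions first so unused budget is passed on
    remaining = sorted(split, key=lambda i: shape[i])
    while remaining:
        edge = budget ** (1 / len(remaining))  # equal share per remaining dim
        i = remaining.pop(0)
        chunks[i] = max(1, min(shape[i], int(edge)))
        budget /= chunks[i]
    return tuple(chunks)


TARGET = 10_000_000  # about 10MB, as recommended above

# 3D variables: keep height (index 1) whole, split time and cell
print(suggest_chunks((8641, 50, 5000), 8, TARGET, keep_whole=(1,)))  # (158, 50, 158)

# 2D variables: split both time and cell
print(suggest_chunks((8641, 5000), 8, TARGET))  # (1118, 1118)
```

The resulting sizes could then be applied with xarray before writing, for example ds.chunk({"time": 158, "height": 50, "cell": 158}).to_zarr("output.zarr"). When no chunk encoding is given, to_zarr takes the zarr chunks from the dask chunks. Keeping height whole is only one possible choice; depending on the typical access pattern, splitting height as well may be preferable.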

Group ICON:
<xarray.DatasetView> Size: 0B
Dimensions:  ()
Data variables:
    *empty*

Group LES:
<xarray.DatasetView> Size: 0B
Dimensions:  ()
Data variables:
    *empty*

Group 3D:
<xarray.DatasetView> Size: 35GB
Dimensions:  (time: 8641, height: 50, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 69kB 2020-01-01 ... 2020-03-01
  * height   (height) int64 400B 0 200 400 600 800 ... 9000 9200 9400 9600 9800
Dimensions without coordinates: cell
Data variables:
    t        (time, height, cell) float64 17GB dask.array<chunksize=(579, 50, 579), meta=np.ndarray>
    u        (time, height, cell) float64 17GB dask.array<chunksize=(579, 50, 579), meta=np.ndarray>
Each variable: 16.10 GiB in 135 chunks of 127.88 MiB (uncompressed).

Group 2D:
<xarray.DatasetView> Size: 691MB
Dimensions:  (time: 8641, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 69kB 2020-01-01 ... 2020-03-01
Dimensions without coordinates: cell
Data variables:
    t_2m     (time, cell) float64 346MB dask.array<chunksize=(579, 579), meta=np.ndarray>
    u_10m    (time, cell) float64 346MB dask.array<chunksize=(579, 579), meta=np.ndarray>
Each variable: 329.63 MiB in 135 chunks of 2.56 MiB (uncompressed).
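The time coordinate in these groups runs from 2020-01-01 to 2020-03-01 in 10-minute steps. That accounts for the 8641 values: 60 days (including 29 February 2020) times 144 steps per day, plus the end point. Concatenating output from several files along time can introduce duplicate time stamps, for example where restart runs overlap.

Below is a minimal NumPy sketch that checks for a uniform, duplicate-free axis and keeps only the first occurrence of each time stamp. The overlapping input is made up for illustration, and is_uniform_time_axis and first_occurrence_index are hypothetical helpers. In xarray, the returned indices could be applied with ds.isel(time=idx); recent xarray versions also provide Dataset.drop_duplicates.

```python
import numpy as np


def is_uniform_time_axis(times, step):
    """True if times increase by exactly `step` everywhere (no gaps, no duplicates)."""
    return bool(np.all(np.diff(times) == step))


def first_occurrence_index(times):
    """Indices of the first occurrence of each distinct time stamp, in time order."""
    _, idx = np.unique(times, return_index=True)
    return idx


# Rebuild the time axis shown in the reprs (10-minute steps, end point included)
step = np.timedelta64(10, "m")
time = np.arange(
    np.datetime64("2020-01-01T00:00"), np.datetime64("2020-03-01T00:10"), step
).astype("datetime64[ns]")
print(len(time), time[-1])  # 8641 2020-03-01T00:00:00.000000000

# Made-up example: two output files that share ten time steps
merged = np.concatenate([time[:100], time[90:200]])
print(is_uniform_time_axis(merged, step))  # False

cleaned = merged[first_occurrence_index(merged)]
print(len(cleaned), is_uniform_time_axis(cleaned, step))  # 200 True
```

The 200 cleaned steps end at 2020-01-02T09:10, the same end point as the shorter time axis of the next dataset.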

Group DALES:
<xarray.DatasetView> Size: 0B
Dimensions:  ()
Data variables:
    *empty*

Group with a 200-step time axis:
Dimensions:  (time: 200, height: 50, cell: 5000)
Coordinates:
  * time     (time) datetime64[ns] 2020-01-01 ... 2020-01-02T09:10:00 (10-minute steps)
  * height   (height) int64 0 200 400 600 ... 9400 9600 9800
Dimensions without coordinates: cell
Data variables:
    t        (time, height, cell) float64 dask.array<chunksize=(200, 50, 579), meta=np.ndarray>, 381.47 MiB in 9 chunks of 44.17 MiB (uncompressed)
    u        (time, height, cell) float64 dask.array<chunksize=(200, 50, 579), meta=np.ndarray>